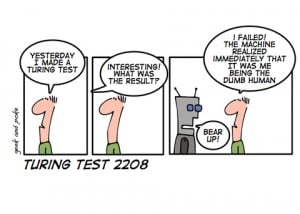

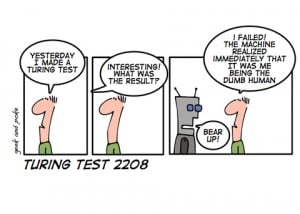

News that a chat-bot named Eugene Goostman passed the Turing test last week has been making the rounds in technical circles. As you probably know, the Turing test poses the question, “Can a computer fool humans into thinking it is human?” The Turing test is supposed to be a key benchmark of progress toward artificial intelligence (AI). Being able to hold a genuine conversation and sound like a peer is a key component of sentience. But is it enough to constitute sentience in and of itself? As is often the case with startling-looking announcements of technical achievements, a look beyond the headline reveals details that make clear the matter of AI is vastly more complex than a chat program fooling a human into thinking it is human.

For the record, I have been a computer user and hobbyist since the late 1970s, and no, I don’t believe Eugene Goostman is anything even remotely comparable to a HAL-9000 or Skynet (a genuine, if for now fictional, AI). An examination of the chat transcripts revealed that Goostman didn’t truly engage its partner in a manner demonstrating understanding of the material being discussed. Rather, it adhered—barely, at times—to syntactical and grammatical conventions of the English language. And this is what we expect of a computer: it follows the rules humans establish, but it has no understanding of the reasons behind those rules, or of anything beyond those rules.

But let’s play devil’s advocate for a moment. Perhaps we are giving humans a little too much credit. Humans are sentient, yes, but how many conversations do we participate in without really using our sentience and intelligence? For example, how many cups of coffee do you need on a Monday morning before you can shake the sleep out of your brain well enough to pass a Turing test? When you swing by Starbucks at 7:20 in the morning, still yearning for the pillow you left behind, are you going to use your brilliant sentience…or just adhere to the syntactical and grammatical conventions required to make your desire for a latte with extra cream known? Odds are you’re not going to engage the barista in a discussion of Shakespeare or even which Star Trek captain was the best. You just want your coffee, darn it!

This scenario is an example of the “good enough” principle. We see variations on this theme all the time. Technically, vinyl records can have a higher level of fidelity than CDs. Old-fashioned film has a warmth and coloration that digital photography can’t quite compete with on a purely qualitative level. Yet the CD and digital download and digital photography have dominated these media for quite some time. Why? Because they’re good enough for the vast majority of users. They’re not the best, but they work, and economically so.

What does “good enough” have to do with Turing tests and AI? I don’t think we’re going to have a single “Terminator” moment when a computer or network of computers wakes up, becomes sentient, and gives us a true strong AI. What we’re more likely to see over the next half-century or so is computers passing through a progressive range of “good enough” benchmarks, for intelligence or otherwise, to perform certain tasks adequately…and as time goes on, those tasks will require more and more of what we could call “intelligence.” For example, last century computers became “good enough” to assist people with routine bank transactions, and the ATM was born. This century, computers became good enough to handle the vending and return of DVDs. Computers are on the cusp of becoming good enough to operate motor vehicles more reliably than humans in real-world conditions, a benchmark that will likely be checked off by the end of this decade, if not sooner. As much as I loved 20th-century science fiction, where humans explored space, by the 2020s robots are going to be “good enough” to handle delicate objects, make semi-autonomous decisions, and explore the solar system; the prototypes are already in service. The pilot of the first interstellar probe will almost certainly be a robot, heroic movies notwithstanding. And so it will progress, with robots handling more and more work currently done by humans, until at some point we come to agree they are “intelligent” in some sense.

Here in 2014, however, robots have not yet mastered the art of conversation, empathy, or emotion. And if you are a business with customers to support, you don’t want a Eugene Goostman picking up your phone or answering emails when your users try to reach you with questions about your new gadget or SaaS product. It’s going to be a long time before a robot can show understanding and respond with the right empathy to a frustrated customer. This is where Metaverse comes in, with solutions to your help desk needs, and live representatives who certainly can pass a Turing test and provide the friendly, knowledgeable, human touch we all want when we have a problem and seek help. Just let us know how WE can help!

Benjamin Stockton

Project Manager

The mods of Metaverse Mod Squad are the superheroes of the Internet. No, we can’t fly (yet), but just as Batman protects the citizens of Gotham, and Superman guards Metropolis, our mods watch over some of your favorite online communities.